ZNZ Symposium 2023 - Controlling Prosthesis with Motor Units

14 Sep 2023

This year at the ZNZ symposium, I’ll be sharing one of the project ideas I’m currently tinkering with. I don’t have all the results yet; it’s a work in progress.

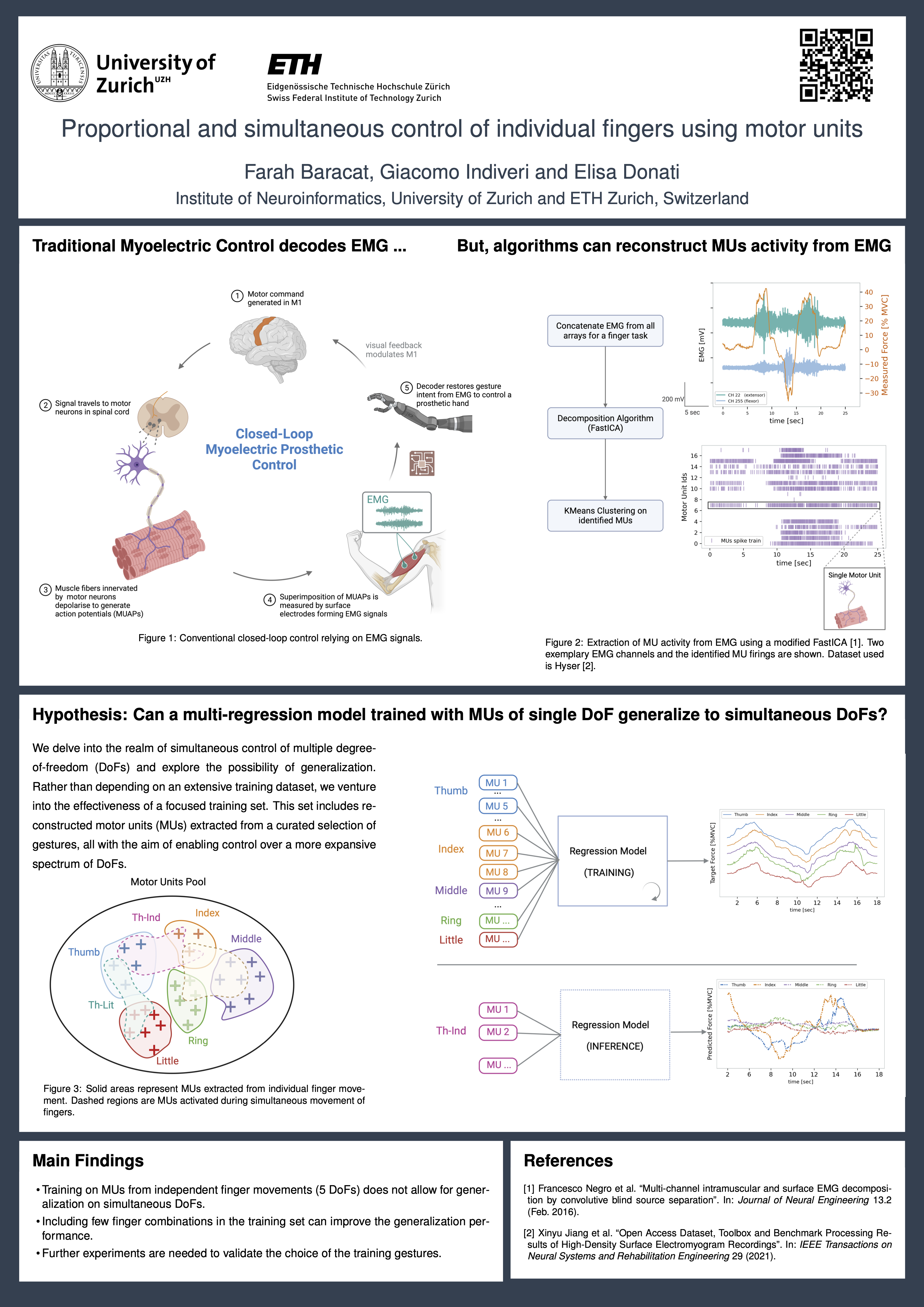

We’re exploring the idea of extracting spinal motor unit activity from surface EMG and using the units’ spiking patterns to decode and control intricate hand movements. This in itself is not a new idea. The novel part of this project is how to decode multiple degrees-of-freedom simultaneously.

One of the key challenges in the field is robustly deciphering individual finger movements. The problem is the amount of training data needed to do that… Let’s think about it: we have 5 fingers :D and we can move them in (mostly) any combination. If we wanted to capture all of those variations, we would need a dataset with the fingers moving in every possible way. This is very tedious and, well… impractical. In this project, I am trying to look for shortcuts. In other words, what is the smallest possible set of gestures I can have in my training data that still allows me to decode all the other combinations?
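To get a feel for how quickly the combinations pile up, here is a small sketch. It makes a strong simplifying assumption that is mine, not the project's: each finger is either flexed or at rest, with no graded force and no extension. The "single-finger" subset is just one hypothetical candidate for a reduced training set; it is not necessarily the set used in this work.

```python
from itertools import combinations

FINGERS = ["thumb", "index", "middle", "ring", "little"]

def all_gestures(fingers):
    """Every non-empty subset of fingers that could be flexed together.

    Assumes a binary flexed/at-rest state per finger (a simplification).
    """
    return [
        combo
        for k in range(1, len(fingers) + 1)
        for combo in combinations(fingers, k)
    ]

gestures = all_gestures(FINGERS)

# Hypothetical reduced training set: one gesture per finger.
single_finger = [g for g in gestures if len(g) == 1]

print(f"all binary gestures: {len(gestures)}")
print(f"single-finger gestures: {len(single_finger)}")
```

Even in this binary picture there are 2^5 - 1 = 31 gestures versus 5 single-finger ones, and once you add graded force levels per finger the full space grows much faster.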

Why do that? I know what you might be thinking: why not decode EMG directly, right? :D

WELL… Basically, the hypothesis is that by reverting to a neural space (by reconstructing the motor unit activity), we are dealing with a more stable representation of the motor intent, which we can leverage to design fine controllers. The EMG signal has many limitations:

- EMG is only a global indication of the movement. Extracting finer finger motor intentions becomes very challenging.

- EMG suffers from amplitude cancellation. Previous work has shown that we are unable to decode small changes in muscle forces (e.g., in the range of 10% of your maximal voluntary contraction).

Poster Design Philosophy

This year I tried to reduce the amount of text in the poster and focus more on the visuals (which I created with the fabulous BioRender; I only came across it recently).

Poster Abstract

Myoelectric interfaces have transformed the field of neurorehabilitation and prosthetics by allowing individuals to control artificial limbs using surface electromyography (sEMG) signals. Yet, achieving simultaneous and proportional control over individual fingers remains a challenge. We present a framework for training a robust finger decoder by leveraging the activity of alpha motor units (MUs) extracted from sEMG signals. MUs, the basic functional units of muscle activation, provide accurate information about the motor task. Our approach addresses two key challenges: the lack of precision in the current decoding approaches and the need for large training datasets.

To overcome these challenges, a decomposition algorithm extracts MUs from the sEMG signals. Next, a linear regression model predicts the contraction force associated with each finger. Additionally, we investigate the potential for generalization using a limited training set consisting of a small set of gesture combinations to control a broader range of degrees-of-freedom (DoFs).

By leveraging MUs and a limited training set, this work aims to enhance the precision and expand the control capabilities of myoelectric prostheses. The findings contribute to the practical implementation of simultaneous and proportional control over individual fingers in neurorehabilitation and prosthetics.
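For readers who want something concrete, below is a minimal sketch of the regression step described in the abstract. It is not the project's actual code. The decomposition step is skipped entirely: the spike trains are random synthetic data standing in for the output of a decomposition algorithm, the forces are also synthetic, and all names and parameters (`smooth_rates`, `window`, the number of units, the noise level) are my own illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: time samples, motor units, fingers.
n_samples, n_units, n_fingers = 4000, 20, 5
window = 200  # smoothing window in samples (illustrative choice)

# Synthetic stand-in for decomposition output:
# binary spike trains with shape (samples, units).
spikes = (rng.random((n_samples, n_units)) < 0.02).astype(float)

def smooth_rates(spikes, window):
    """Moving-average estimate of each unit's firing rate."""
    kernel = np.ones(window) / window
    return np.column_stack(
        [np.convolve(spikes[:, u], kernel, mode="same")
         for u in range(spikes.shape[1])]
    )

rates = smooth_rates(spikes, window)

# Synthetic finger forces: a linear mix of the rates plus small noise.
true_weights = rng.random((n_units, n_fingers))
forces = rates @ true_weights + 0.001 * rng.standard_normal((n_samples, n_fingers))

# Linear regression (ordinary least squares) with a bias column,
# fitted on the first half and evaluated on the second half.
X = np.column_stack([rates, np.ones(n_samples)])
split = n_samples // 2
W, *_ = np.linalg.lstsq(X[:split], forces[:split], rcond=None)
pred = X[split:] @ W

# Coefficient of determination per finger on the held-out half.
ss_res = np.sum((forces[split:] - pred) ** 2, axis=0)
ss_tot = np.sum((forces[split:] - forces[split:].mean(axis=0)) ** 2, axis=0)
r2 = 1 - ss_res / ss_tot
print(np.round(r2, 3))
```

Because the synthetic forces are linear in the rates by construction, the fit here is close to perfect and says nothing about real performance. The interesting question in the actual project is what happens when the training half only contains a small set of gestures and the test half contains unseen combinations.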